Introduction

Three years ago today, Sam Altman made a rather low-key post on Twitter announcing the launch of this chatbot. He wrote, “Language interfaces will be important… This is an early demonstration, and there are many limitations—it’s very much like a research preview.”

At that time, we had no idea that this dialogue box would become the biggest variable in human society over the next three years.

Today is December 1, 2025. Three years have passed since that day.

In these three years, we have experienced the panic of “AI will destroy humanity” and the frenzy of “every industry will be reshaped.” Looking back from this point in time, the world has not been dominated by a Skynet-like entity as depicted in sci-fi movies, nor have we instantaneously entered a utopia as optimists predicted.

The world has changed. It has become more nuanced, more efficient, but also more confusing.

An Unexpected Start

Looking back, the birth of ChatGPT was itself full of serendipity.

In a March 2023 interview with MIT Technology Review, the OpenAI team said they had little confidence in the product before its release. Greg Brockman admitted that no one in the company believed it was “really useful.”

The team originally planned to focus on more vertical applications, but after those attempts failed, they decided to release this chatbot, fine-tuned from GPT-3.5, as a “research preview” to gather human feedback.

Jan Leike later recalled that the product’s viral spread left the team “overwhelmed.” John Schulman stated that in the days following the release, he kept refreshing Twitter, watching the screenshots of ChatGPT flood in, yet he couldn’t understand why a model with “many flaws” garnered such attention.

A product not intended to change the world ultimately did change the world.

Reality Check of the Scaling Law

When ChatGPT first launched, the discussion around it was full of grand predictions. Some worried that white-collar jobs would be fully replaced, while others believed that 2025 would mark the arrival of Artificial General Intelligence (AGI). Elon Musk predicted that by the end of 2025, “we will have AI smarter than any single human.”

Now, at the end of 2025, AGI has not arrived.

The so-called “Scaling Law,” which posits that simply piling on more computing power and data will keep yielding more intelligence, has encountered significant real-world resistance. Gary Marcus pointed out in his three-year reflection that many promises of “10x productivity increases” have largely fallen flat.

Some studies have shown about a 30% efficiency increase, but a qualitative leap has yet to materialize. An article in The Economist bluntly stated that corporate adoption of generative AI appears “surprisingly sluggish.”

More importantly, the tech industry has hit a wall in the physical world over these three years.

When ChatGPT was first released, we thought the limits on AI development were algorithmic; later, we believed the bottleneck was the depletion of high-quality data. By 2025, the world had to admit that the ultimate barrier to AGI was actually electricity.

Over the past three years, the power consumption of data centers worldwide has surged. As model parameter counts continue to grow, energy has evolved from a technical cost into a fundamental constraint. As a result, Silicon Valley giants are now investing not only in chip companies but also in nuclear power plants and fusion energy startups.

This physical constraint has also reshaped the industry’s technological direction to some extent. By 2025, “on-device AI” has gained increasing attention. Not every problem requires answers from large cloud models; this simple truth is being reconsidered seriously.

Mobile and chip manufacturers are striving to make devices smarter to handle more tasks locally without consuming expensive cloud computing resources each time.

The commercial reality has also cooled down.

Remember the near-manic “gold rush” of 2023 and 2024? Every company had to mention AI in their earnings reports, and every CEO was discussing an “AI-first” strategy. By the end of 2025, that enthusiasm is waning.

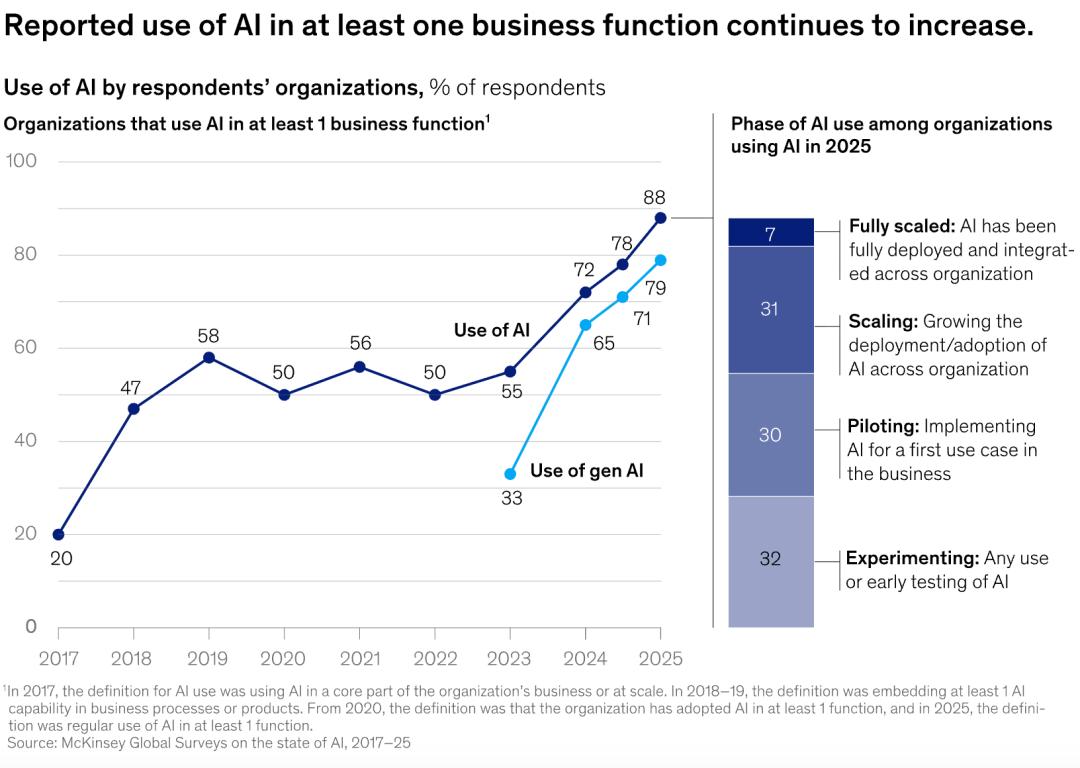

Although ChatGPT boasts 800 million monthly active users, the actual adoption rate in enterprises tells a different story. According to the Federal Reserve and the Census Bureau, the proportion of people using generative AI daily in the workplace hovers around 12%, with little growth over the past year. McKinsey’s latest research shows that two-thirds of companies are still in the “pilot” phase, with only a handful able to attribute more than 5% of their profit to AI.

Thus, debates about an “AI bubble” have been incessant in recent times.

The capital market is the most sensitive barometer. Just last month, Nvidia’s stock, which had been soaring, dropped 16%, and Oracle also saw a significant decline of about 26%. The market is beginning to realize that the narrative of “exponential growth” may have issues. While investment in computing power is indeed growing exponentially, the returns are diminishing at the margin.

OpenAI itself is also under pressure. Once a pioneer, it is losing its moat. Google’s Gemini 3 has surpassed GPT-5 in several benchmark tests, and open-source models like DeepSeek and the Qwen series are making it increasingly cheap to “build your own large model.” The “winner-takes-all” scenario hinted at by Sam Altman has not materialized; instead, large language models are rapidly becoming commoditized.

What does this mean? For ordinary users, AI tools are becoming cheaper and more accessible; but for companies trying to profit from selling models, the future may be a tougher battle.

What Has Truly Changed

If the energy constraints are in the background, the most visible changes are happening at our fingertips.

Think back to the last time you faced a completely blank Word document or code editor, painfully trying to conceive the first word. When was that?

In 2025, “starting from scratch” has become a luxury, even regarded as inefficient in some high-pressure industries. Whether drafting documents, coding modules, or designing posters, AI has taken over the process of “going from 0 to 60.”

Almost all mainstream creative software now includes some form of AI assistance. We have collectively transitioned from content creators to editors, reviewers, and architects.

This indeed has led to increased efficiency. However, a side effect has been the dramatic proliferation of mediocre content, or what is termed “AI Slop.”

Today’s internet is dense with information, yet its nutritional density is declining. Opening social media or search engines, we are surrounded by a flood of grammatically perfect, well-structured but hollow synthetic text. This content has no logical flaws, yet it has no soul.

We are forced to develop a new reading ability, needing to discern the subtle “warmth” and flaws amidst a sea of repetitive phrases to identify which thoughts are genuinely human.

The changes in content generation have also sparked subtle issues in the workplace.

In 2023, people worried that AI would directly take jobs; by 2025, a more pressing concern may be another issue: AI is changing the path of career growth.

In the past, junior programmers gained experience by writing simple modules, and junior copywriters honed their language sense through extensive practice. These tasks can now largely be performed by AI. From the perspective of corporate efficiency, this is a good thing; but from the perspective of talent development, the issue becomes complex: when entry-level jobs are contracted out to AI, how will the next generation of senior talent grow? Without undergoing basic training, where will professional intuition and experience come from?

Meanwhile, the job market is showing a seemingly contradictory trend: the value of general skills is declining, while a distinctive “human touch” is becoming increasingly scarce.

AI has lowered many technical barriers, but simultaneously raised the relative value of taste and judgment. Those possessing unique aesthetics, strong empathy, or deep insights in a niche field have found new competitive advantages in this environment. While AI can generate vast amounts of standardized content, it still has clear limitations in creating truly moving works.

Three Years Later

ChatGPT is three years old. It has not become a god; it has simply become a basic utility of the internet, like water, electricity, and coal. It is expensive and occasionally experiences outages, but we have indeed become dependent on it. This may not be the future we dreamed of, but it is the present we have.

If we were to compare it to human age, a three-year-old child has just begun to develop self-awareness, starting to explore the world while also being filled with uncontrollable emotions and chaos.

ChatGPT and the generative AI industry behind it are in such an awkward yet critical “growing phase.”

It is no longer the prodigy that could win applause with just a few witty remarks. It now carries the expectations of hundreds of billions of dollars, faces competitors that are even smarter (like Gemini 3), and must navigate increasingly stringent copyright, privacy, and ethical scrutiny.

Has the world changed? Yes.

But not in the dramatic way depicted in sci-fi movies; rather, in a more gradual, everyday manner. We have learned to leverage AI to enhance efficiency at work while staying alert to the moments when it is unreliable. We no longer expect AI to suddenly replace everything as prophesied, nor do we worry as much about it suddenly overturning society. It now resembles a tool in our toolbox, alongside Photoshop and Excel.

This may not be the AGI utopia Sam Altman initially dreamed of, nor the apocalyptic collapse warned of by Gary Marcus. But it could very well be a sign of technological maturity: when miracles become routine and fervor turns into habit, the real change has only just begun.

Happy third birthday, ChatGPT. Welcome to the challenging real world.
