DeepSeek V3.2: A Powerful and Affordable Alternative

On December 1, DeepSeek announced the release of version 3.2, which is now available to all users. The model weights for local deployment have also been uploaded to several open-source communities. According to official testing, DeepSeek V3.2’s reasoning capabilities are now comparable to those of OpenAI’s GPT-5, at a significantly lower cost.

Enhanced Inference at a Lower Cost

DeepSeek V3.2 comes in two versions: the free version available on the DeepSeek website, and DeepSeek V3.2-Speciale, which is accessible through the API. The Speciale version has enhanced reasoning capabilities and is designed to explore the limits of the model’s reasoning abilities.

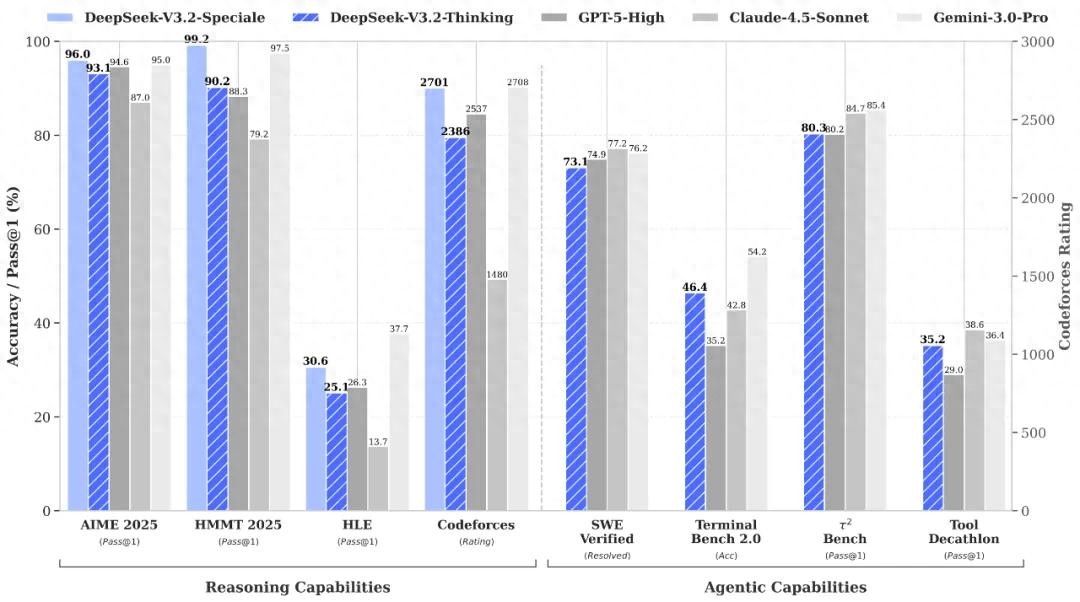

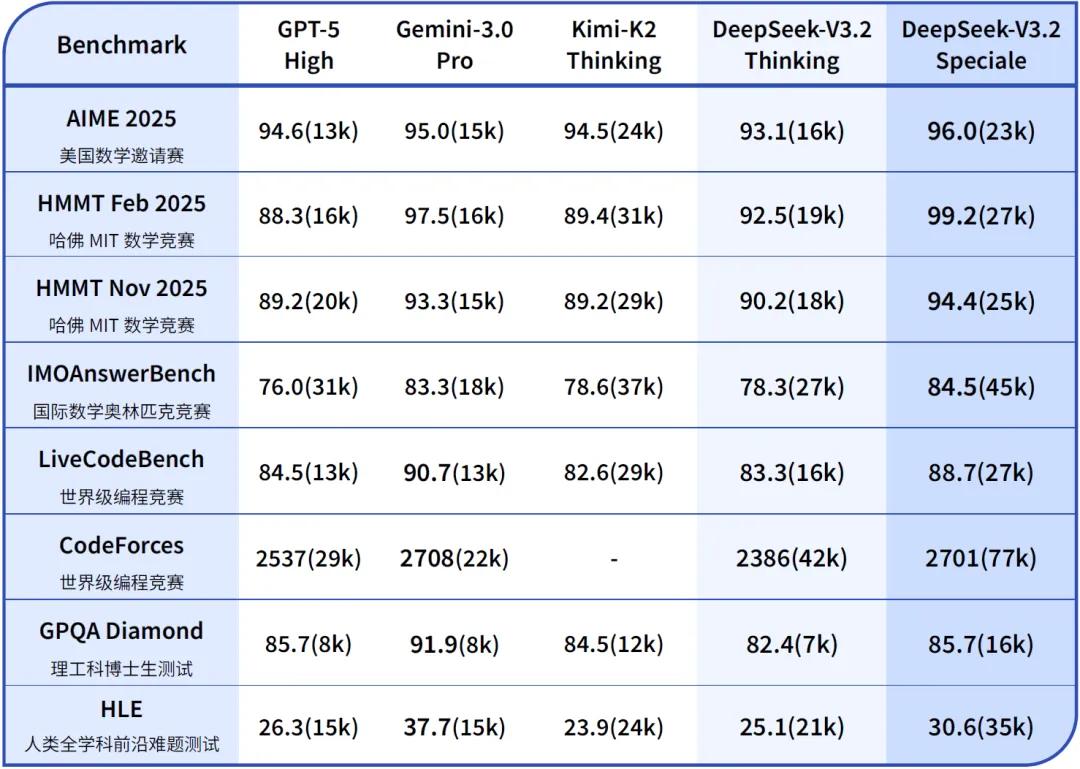

V3.2-Speciale runs in a “long-thinking enhancement” mode and incorporates the theorem-proving capabilities of DeepSeek-Math-V2, which strengthens its instruction following, mathematical proof, and logical verification. In official tests, V3.2-Speciale’s performance on reasoning benchmarks rivals that of the latest Gemini 3.0 Pro.

DeepSeek also tested V3.2-Speciale on finals from prestigious competitions such as IMO 2025 (International Mathematical Olympiad), CMO 2025 (Chinese Mathematical Olympiad), ICPC World Finals 2025 (International Collegiate Programming Contest), and IOI 2025 (International Olympiad in Informatics), achieving gold medal results.

Notably, in the ICPC and IOI tests, it reached levels comparable to the second-place and tenth-place human competitors, respectively, indicating significant advances in programming capability. In head-to-head comparisons, DeepSeek V3.2-Speciale outperformed GPT-5 High, catching OpenAI off guard.

Technical Breakthroughs

The main breakthrough of DeepSeek V3.2 is the introduction of the DeepSeek Sparse Attention (DSA) mechanism, which addresses the efficiency of attention over long sequences. Traditional attention mechanisms calculate associations between every pair of elements in a sequence, while DSA computes associations only among selected key elements, significantly reducing the amount of computation required.

Similar technology was hinted at in a paper earlier this year, in which DeepSeek introduced a new attention mechanism called NSA (Native Sparse Attention). However, NSA was not publicly implemented in subsequent updates, leading to speculation about potential difficulties. It now appears that DeepSeek has found a better implementation method. DSA operates like a search engine: a “lightning indexer” quickly scans the long text and picks out the parts worth attending to, in contrast with NSA’s fixed-area search approach.
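To make the idea concrete, here is a minimal pure-Python sketch of index-then-attend sparse attention. It is an illustration under stated assumptions, not DeepSeek's implementation: the `index_scores` function, its `dims` parameter, and the `top_k` value are all hypothetical stand-ins for whatever the real indexer does.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dense_attention(query, keys, values):
    """Standard attention: the query is scored against every key."""
    scale = 1.0 / math.sqrt(len(query))
    weights = softmax([dot(query, k) * scale for k in keys])
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]

def index_scores(query, keys, dims=2):
    """Hypothetical cheap indexer: scores keys using only the first `dims` components."""
    return [dot(query[:dims], k[:dims]) for k in keys]

def sparse_attention(query, keys, values, top_k):
    """Attend only to the top_k positions ranked by the cheap index score."""
    scores = index_scores(query, keys)
    ranked = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)
    selected = sorted(ranked[:top_k])  # restore original order of kept positions
    out = dense_attention(query,
                          [keys[i] for i in selected],
                          [values[i] for i in selected])
    return out, selected

query = [1.0, 0.0, 0.5, -0.5]
keys = [[0.9, 0.1, 0.0, 0.0], [-1.0, 0.2, 0.3, 0.1],
        [0.8, -0.1, 0.4, 0.0], [0.0, 1.0, 0.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [0.2, 0.8]]

output, kept = sparse_attention(query, keys, values, top_k=2)
print(kept, output)
```

The full attention step runs over only `top_k` positions instead of the whole sequence, which is where the savings on long inputs would come from. The indexer still touches every position, so it has to be much cheaper per position than full attention for the trade to pay off.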

With DSA, the cost of 128K-sequence inference can be reduced by over 60%, inference runs approximately 3.5 times faster, and memory usage drops by 70%, all without significantly degrading model performance.

Official data shows that in tests on an H800 cluster, the prefill cost per million tokens dropped from $0.70 to around $0.20, while the decoding cost fell from $2.40 to $0.80, making DeepSeek V3.2 potentially the lowest-cost model for long-text inference among its peers.
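As a quick check on how those per-million-token prices relate to the "over 60%" figure, the arithmetic can be sketched as follows. The prices are the ones quoted above; the function name is only illustrative.

```python
def relative_reduction(before, after):
    """Fraction by which a cost fell, e.g. 0.5 means the cost was halved."""
    return (before - after) / before

# Prices per million tokens as quoted above (USD).
prefill_cut = relative_reduction(0.70, 0.20)
decode_cut = relative_reduction(2.40, 0.80)

print(f"prefill: {prefill_cut:.1%}")  # prefill: 71.4%
print(f"decode:  {decode_cut:.1%}")   # decode:  66.7%
```

Both reductions land above 60%, and the decoding price falls to exactly one third of its earlier value.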

Tool Utilization

In addition to the DSA mechanism, DeepSeek V3.2 lets the model call tools during its reasoning process without requiring additional training. This upgrade improves DeepSeek V3.2’s general performance, and because the model is open-source, it can more easily accommodate user-created tools.

To test DeepSeek V3.2’s new features, I designed several questions to evaluate its responses, starting with a reasoning task:

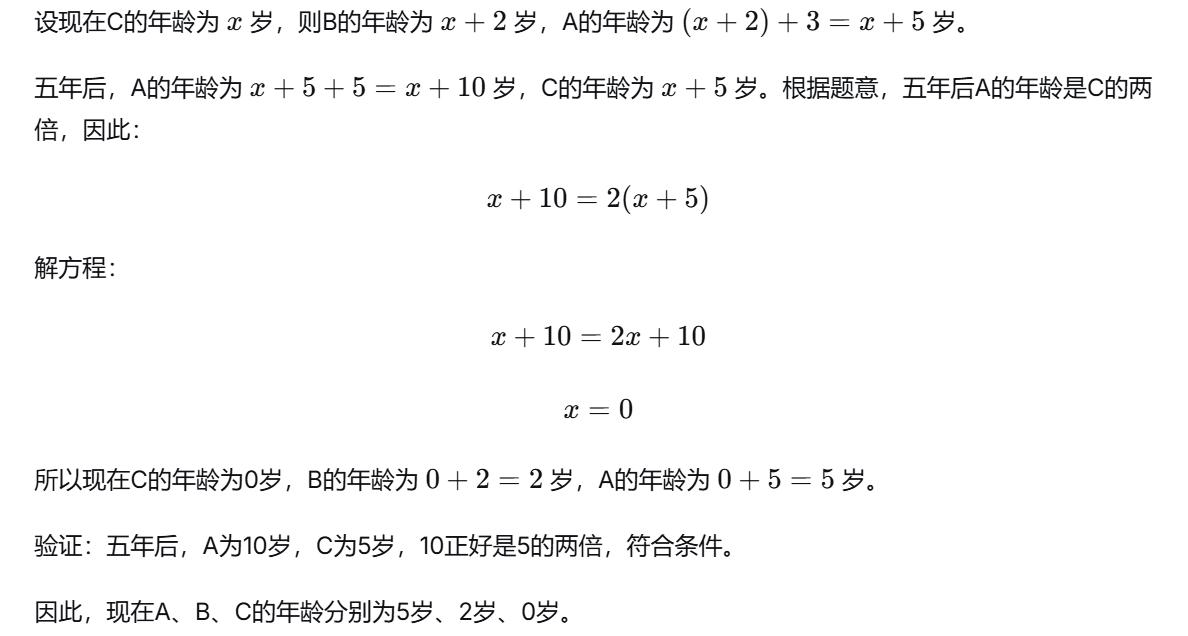

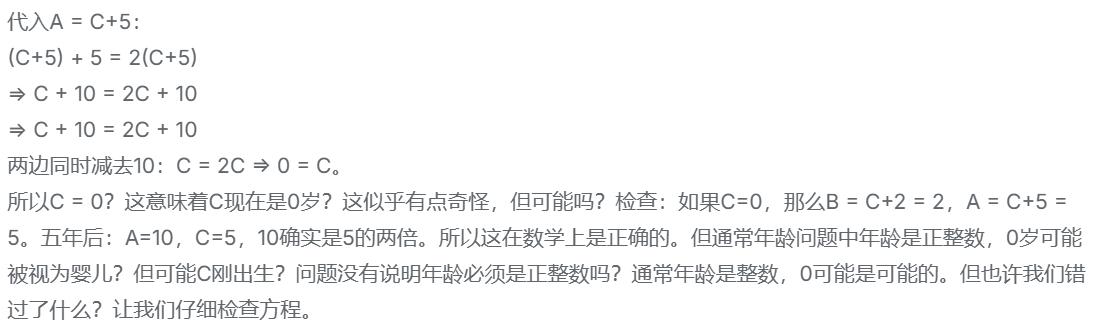

Question: A is three years older than B, and B is two years older than C. In five years, A’s age will be twice that of C. What are their current ages?

Answer:

The answer was correct. The reasoning process went through multiple rounds of verification before arriving at the final answer, which may matter for a sparse architecture like DSA, where attending to fewer tokens could in principle raise the chance of errors.
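The puzzle itself reduces to one linear equation. Writing C's age as c, B is c + 2 and A is c + 5, so the condition "in five years A is twice C" becomes c + 10 = 2(c + 5), which gives c = 0. The short brute-force check below confirms that this is the only solution in a plausible age range; the function name and the `max_age` bound are only illustrative.

```python
def solve_ages(max_age=120):
    """Return every (A, B, C) that satisfies the three conditions of the puzzle."""
    solutions = []
    for c in range(max_age + 1):
        b = c + 2          # B is two years older than C
        a = b + 3          # A is three years older than B
        if a + 5 == 2 * (c + 5):  # in five years, A is twice C's age
            solutions.append((a, b, c))
    return solutions

print(solve_ages())  # [(5, 2, 0)]
```

So A is 5, B is 2, and C is a newborn. The zero is what makes the question a useful probe, since a model might be tempted to reject an age of 0 as invalid.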

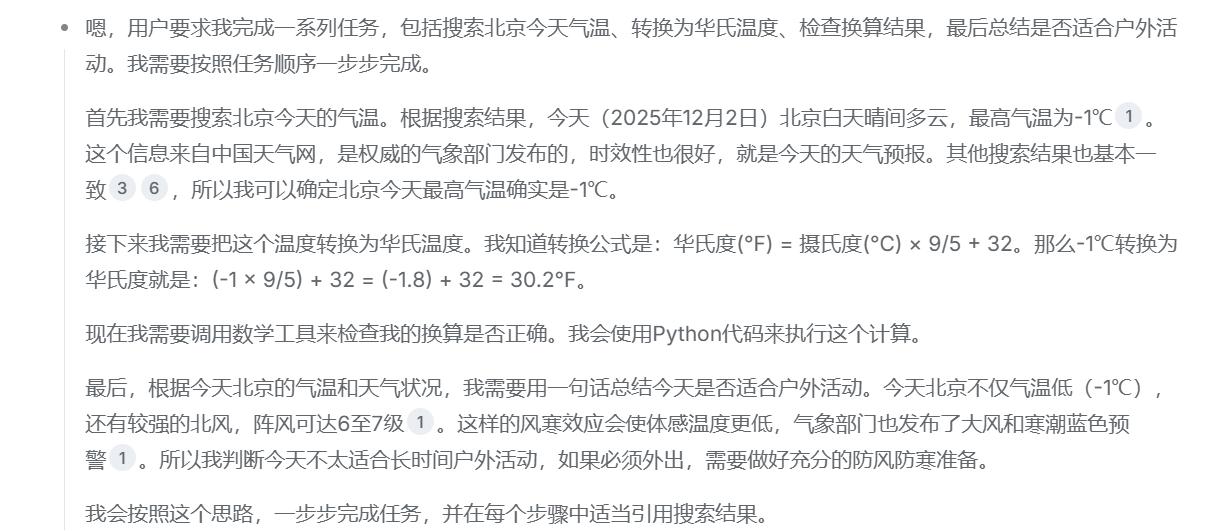

Next, I designed a multi-step task:

- Search for today’s temperature in Beijing.

- Convert the temperature to Fahrenheit.

- Use a tool to check the conversion accuracy.

- Summarize whether today is suitable for outdoor activities.
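Steps two and three of this task amount to a unit conversion followed by an independent check. The sketch below shows one way such a pair of tools could look, using a round-trip conversion as the verification. The tool names, the `TOOLS` registry, and the sample temperature are hypothetical and are not taken from DeepSeek's tooling or from the actual test.

```python
def celsius_to_fahrenheit(celsius):
    return celsius * 9 / 5 + 32

def fahrenheit_to_celsius(fahrenheit):
    return (fahrenheit - 32) * 5 / 9

def verify_conversion(celsius, fahrenheit, tolerance=1e-9):
    """Check a conversion by converting back and comparing with the input."""
    return abs(fahrenheit_to_celsius(fahrenheit) - celsius) <= tolerance

# Hypothetical tool registry, standing in for whatever tools the model is given.
TOOLS = {
    "convert": celsius_to_fahrenheit,
    "verify": verify_conversion,
}

def convert_and_check(celsius):
    """Run the conversion tool, then the verification tool, in that order."""
    fahrenheit = TOOLS["convert"](celsius)
    is_consistent = TOOLS["verify"](celsius, fahrenheit)
    return fahrenheit, is_consistent

# 5.0 °C is a made-up sample value, not the temperature from the test.
print(convert_and_check(5.0))  # (41.0, True)
```

The search step and the final judgment about outdoor activities are left out here, since they depend on live data and on criteria the task does not specify.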

DeepSeek understood the task and used the search and mathematical tools in sequence to arrive at the answer.

The final answer was correct, and DeepSeek autonomously decided when to use the mathematical tool for verification, although it missed summarizing the suitability for outdoor activities. Nevertheless, it demonstrated the ability to make decisions about tool usage.

In contrast, another AI faced with the same question understood the need to “call tools” but resorted to directly searching for data instead of following the steps.

In DeepSeek’s tool usage tutorial, similar problems are presented, demonstrating how multi-turn dialogue and tool usage can improve answer quality. DeepSeek has evolved from merely recalling answers to breaking down problems, asking targeted questions, and utilizing various tools to provide comprehensive solutions.

A Strong Open-Source Contender

Is DeepSeek V3.2 powerful? Yes, but it does not have a clear lead. Test results show it competes closely with GPT-5 High and Gemini 3.0 Pro. However, a model that matches these benchmarks, costs a third or less of what mainstream models cost for inference, and is fully open-source can disrupt the entire market. This is the fundamental logic behind DeepSeek’s ability to reshape the industry.

Previously, there was a common belief that “open-source models are always eight months behind closed-source models.” While this may be debatable, the release of DeepSeek V3.2 clearly challenges this notion. DeepSeek continues to advocate for full open-source access, especially with the introduction of DSA, which significantly lowers costs and enhances long-text capabilities, positioning open-source models as challengers rather than mere followers of closed-source giants.

The cost revolution brought by DSA will significantly impact the commercialization of AI models, as both training and inference costs remain high. A reduction of 60% in costs not only affects operational expenses but also lowers initial deployment costs, enabling even small enterprises to train more powerful models.

With lower costs for long-text interactions, advanced AI applications (agents, automated workflows, long-chain reasoning, etc.) will no longer be confined to enterprise markets but can be more effectively promoted for consumer use. This could greatly accelerate the trend of “AI tools replacing traditional software,” allowing AI to penetrate everyday use at the operating system level.

For ordinary users, it may simply seem like an additional free and useful model, but in a few months, you may notice significant improvements in AI experiences across various hardware and software, likely thanks to DeepSeek’s contributions.
